

Why Network Engineers Still Don't Trust AI With the Network

We'll let AI write production code and draft legal contracts. Ask it to touch BGP on a core router and the room goes quiet. Here's why that instinct is correct.

Stephen Stack

CTO, rConfig

# Why Are Network Engineers So Conservative When It Comes to AI on the Network?

I've spent twenty-five years in networking. Most of those years followed the standard arc: enterprise architecture at Dell, global infrastructure work in financial services, a stint as CTO of Microsoft Services at EY, and now running rConfig. The last two years have been different. I've been deep in the agentic AI weeds, building production Laravel and Vue applications with AI assistance, supervising long-running agent workflows, and watching very closely what these tools can and cannot do across hundreds of real engineering tasks.

So here's the thing I keep noticing.

We'll happily let AI write production code. We'll let it draft contracts, summarise medical scans, refactor entire microservice fleets, write SQL against production data, and ship features over a weekend. But suggest that an AI agent should be allowed to push a route map change into a production core, and suddenly every network engineer in the room becomes a 1998 firewall admin clutching a console cable.

I want to talk about why that is. Because I think network engineers are right to be cautious, and I think the AI industry has barely begun to take their objections seriously.

## The network is different, and engineers know it

When an application breaks, an SRE team rolls back a deployment. When a database query goes wrong, the DBA kills the session. These are bounded blast radii. Painful, sometimes career-defining, but bounded.

When a network breaks, everything breaks. Voice, ERP, security tooling, VPN, cloud connectivity, identity providers, monitoring, the WAN, the campus, the data centre, the internet egress. All of it. Simultaneously.

I've watched a single misconfigured route map silently drop a /16 from a BGP advertisement and partition a global business for ninety minutes. I've watched a junior engineer paste a perfectly reasonable command into the wrong terminal window and isolate a data centre from its own management plane. I've watched MTU mismatches create that special kind of half-broken state where ping works fine and nothing else does, and where the operations team spends six hours convinced it's a firewall problem.
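The first of those failures, a prefix quietly vanishing from an advertisement, is the sort of thing a pre/post comparison can surface. What follows is a minimal sketch, not a production check: the function name `dropped_prefixes` and the sample prefixes are hypothetical, and it assumes you already have the advertised prefixes as plain lists of strings captured before and after the change.

```python
import ipaddress


def dropped_prefixes(before, after):
    """Return prefixes advertised before a change that are absent after it.

    Both arguments are iterables of prefix strings. How they are collected
    (CLI scrape, API, route collector) is outside this sketch.
    """
    # Parsing normalises the text form, so the comparison is on networks.
    before_set = {ipaddress.ip_network(p) for p in before}
    after_set = {ipaddress.ip_network(p) for p in after}
    return sorted(str(net) for net in before_set - after_set)


# Hypothetical snapshots taken either side of a route map change.
pre_change = ["10.20.0.0/16", "192.0.2.0/24", "198.51.100.0/24"]
post_change = ["192.0.2.0/24", "198.51.100.0/24"]

missing = dropped_prefixes(pre_change, post_change)
if missing:
    print("Prefixes no longer advertised:", ", ".join(missing))
```

The comparison is an exact set difference on purpose. Whether a remaining aggregate makes a lost more-specific acceptable is a judgement about the topology, which is the part the script cannot make.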

Networks are one of the last genuinely stateful, interdependent, timing-sensitive systems in enterprise IT. They do not behave like microservices. You can't blue-green a BGP session. You can't canary-deploy a spanning tree topology. You can't roll back time on a route advertisement that's already been propagated to half the planet's transit providers.

That context matters enormously when you're thinking about where AI should sit in the network operations stack.

## AI is very good at being confidently wrong

Here's the uncomfortable truth I've learned from building with LLMs and agentic systems for the last two years.

These tools are extraordinary at generating plausible output. They are not extraordinary at generating correct output, especially in narrow domains with sharp edge cases. They hallucinate syntax. They invent commands. They confidently mix Cisco IOS, NX-OS, and Junos syntax in the same config block when you're not looking. They collapse vendor nuance into statistical averages.

And here's the thing about networking: nuance is the job.

`neighbor 10.1.1.1 next-hop-self` is harmless in one topology and a route black hole in another. `spanning-tree portfast trunk` is occasionally valid and occasionally the opening line of a four-hour outage. A BGP local-pref change can look like a one-line cleanup or rewrite the path selection for an entire autonomous system, depending on where in the topology it lands. Then there is redistributing OSPF into EIGRP without filtering, leaking RFC 1918 space into an MPLS core because someone got the route target import wrong, and EVPN underlay assumptions that hold for forty-eight of fifty leaves and break catastrophically on the other two.

LLMs do not have the topology awareness to know any of this. They do not have the operational history. They do not have the tribal knowledge that says "don't touch that ACL, it was written in 2014 by a contractor who's now in New Zealand and nobody knows what application it protects, but the CFO will personally call you if it breaks."

Syntax correctness is the easy part. The hard part is the part LLMs can't see.
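One modest way to act on that is to stop asking a tool whether a line is correct and instead have it flag lines whose safety depends on context, so that a human looks at them. The sketch below is illustrative only: the pattern list is hypothetical, drawn from the examples in this section rather than from any vendor reference, and a real check would need far more than regular expressions.

```python
import re

# Hypothetical patterns taken from the examples in this article. A match
# means "a human should look at this", not "this line is wrong".
REVIEW_PATTERNS = [
    (re.compile(r"\bnext-hop-self\b"),
     "rewrites the BGP next hop; safety depends on topology"),
    (re.compile(r"\bspanning-tree portfast trunk\b"),
     "portfast on a trunk; confirm what is attached"),
    (re.compile(r"^\s*redistribute\s"),
     "redistribution; confirm filtering is in place"),
]


def flag_for_review(proposed_lines):
    """Return (line number, line, reason) for each line needing human review."""
    findings = []
    for lineno, line in enumerate(proposed_lines, start=1):
        for pattern, reason in REVIEW_PATTERNS:
            if pattern.search(line):
                findings.append((lineno, line.strip(), reason))
    return findings


# Hypothetical proposed change.
proposed = [
    "router bgp 65000",
    " neighbor 10.1.1.1 next-hop-self",
    "interface GigabitEthernet0/1",
    " spanning-tree portfast trunk",
    " description uplink to distribution",
]

findings = flag_for_review(proposed)
for lineno, line, reason in findings:
    print(f"line {lineno}: {line!r} -> {reason}")
```

The script has no idea which topology the change lands in. All it does is route the question to someone who does.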

## Conservatism in networking is accumulated operational scar tissue

I want to be honest about something the industry does not talk about enough.

Network engineers are cautious because they have been trained through outages. Two AM maintenance windows that ran until five. Bridge calls with thirty people on them and a CIO listening in silence. Typing commands with hands shaking slightly because you know the change you're about to make affects sixty thousand users. The specific flavour of dread that comes with realising mid-change that your fallback plan depends on the very link you're about to take down.

If you've never lived through that, the conservatism looks like Luddism. If you have, it looks like wisdom.

This is the part the AI industry tends to wave away. "Move fast and break things" is a perfectly reasonable philosophy when "things" means a feature flag or an A/B test. It's a categorically different proposition when "things" means a hospital WAN during a code blue, or a trading floor during the European open, or a manufacturing plant's PLC network in the middle of a production run.

Network engineers are not resistant to innovation. They're resistant to people who don't carry the pager telling them how to do their job.

## AI has no consequences, and that's the actual problem

Here's the centrepiece argument I want to make, and I think it's the one the industry is structurally incapable of confronting.

AI does not get fired. It does not get paged at three in the morning. It does not sit on the outage call explaining to the COO why the call centre was down for forty minutes. It does not lose customer trust. It does not write the post-incident review. It does not feel the slow burn of professional reputation damage that comes from being the person whose name is attached to the change ticket that broke production.

Humans absorb all the blast radius. Every bit of it.

So when you're a network engineer being told to trust an autonomous agent with your production estate, the asymmetry is glaring. The agent has nothing to lose. You have everything to lose. And the vendor selling the agent typically has a support contract that explicitly excludes consequential damages.

This is not a technology problem. It's an accountability problem. And it's not one you can solve with a better prompt or a larger model.

## Full autonomous networking is further away than the hype suggests

Let me push back on the AI-runs-the-network narrative directly.

Enterprise networks are not homogeneous. Documentation is incomplete on a good day and actively misleading on a bad one. Intent is rarely explicit; it lives in the heads of three or four senior engineers who've been there a decade. Configs drift. Legacy protocols persist for reasons that made sense in 2008 and now nobody remembers. Edge cases dominate reality.

Every real production network I've worked on contains at least one piece of hardware running an IOS version that hasn't seen a security patch since the last World Cup, a "temporary" static route from 2017 that's now load-bearing, a vendor quirk that violates the RFC but works around a bug in someone else's vendor quirk, an undocumented failover behaviour that only manifests during a specific failure mode nobody has tested in years, and a VLAN that does something important for a reason nobody can articulate.

The network is a living archaeological site. AI agents that assume clean state, complete documentation, and explicit intent are going to crash into the reality of that archaeology very hard.

## What AI will actually do for networking

That said, I'm not a pessimist on this. I run a network configuration management platform. I've spent the last eighteen months building AI-assisted tooling into rConfig. I am bullish on where this goes.

But the shape of the win is different from the headline.

The realistic future of AI in networking is assistance, not autonomy. Reading configs at a scale humans cannot. Spotting drift across thousands of devices. Identifying risk in proposed changes. Generating remediation proposals with explicit consequence analysis. Explaining what a twelve-year-old config block actually does. Validating policy compliance. Simulating impact before deployment. Catching the kind of human mistakes that cause outages: the missed semicolon, the wrong subnet mask, the route map applied to the wrong neighbour group.
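Drift detection is the easiest item on that list to make concrete, and it needs no write access at all. Here is a minimal sketch using Python's standard `difflib`. The function name `config_drift`, the device name, and the sample configs are hypothetical; it assumes the baseline and running configs are already available as text, and it says nothing about how rConfig itself does this.

```python
import difflib


def config_drift(baseline, running, device):
    """Return unified-diff lines between a baseline and a running config.

    An empty list means the two texts are identical line for line.
    """
    diff = difflib.unified_diff(
        baseline.splitlines(),
        running.splitlines(),
        fromfile=f"{device}/baseline",
        tofile=f"{device}/running",
        lineterm="",
    )
    return list(diff)


# Hypothetical configs for a device called edge1.
baseline_cfg = """hostname edge1
ip route 10.0.0.0 255.0.0.0 192.0.2.1
"""
running_cfg = """hostname edge1
ip route 10.0.0.0 255.0.0.0 192.0.2.1
ip route 172.16.0.0 255.255.0.0 192.0.2.254
"""

drift = config_drift(baseline_cfg, running_cfg, "edge1")
print("\n".join(drift) if drift else "no drift")
```

A plain text diff will also report harmless differences such as reordered lines or timestamps, so a real tool would normalise the configs first. The output is read-only evidence for a human, which is the whole point.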

These are enormous wins. They will reduce outages, shorten MTTR, preserve institutional knowledge, and make network engineers measurably more effective. None of them require an autonomous agent with write access to production.

## The human-governed model

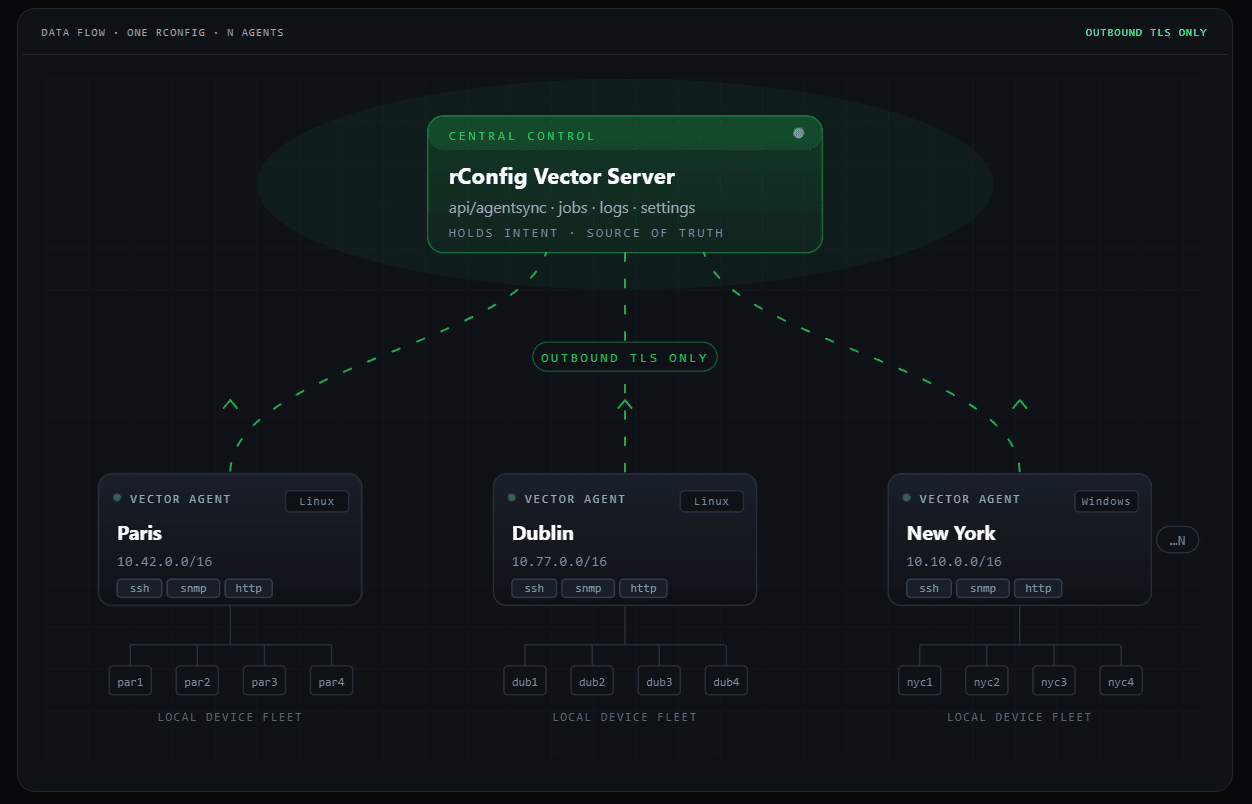

Here's where I think the industry is actually heading, and where we're building at rConfig.

AI reads. AI analyses. AI proposes. AI explains. AI simulates. Humans approve intent, own blast radius, and control deployment.

A concrete workflow: an engineer notices a routing anomaly. An AI agent pulls the relevant configs, the topology, the recent change history, the routing tables, and the historical baselines. It identifies probable cause and proposes a remediation. It generates the change plan with explicit risk scoring, affected paths, rollback steps, and policy validation attached. The change goes through a review workflow with all of that context inline. A human reviews, questions, modifies, approves. The deployment runs through controlled automation with post-change validation built in.
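The approval step in that workflow can be sketched as a gate that deployment code cannot skip. Everything here is a hypothetical illustration, not rConfig's implementation: the `ChangeProposal` class, its fields, the 1 to 5 risk scale, and the `push` callback that stands in for whatever controlled automation actually sends commands to a device.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ChangeProposal:
    """A change drafted by a tool, awaiting a human decision (hypothetical)."""
    summary: str
    commands: List[str]
    rollback: List[str]
    risk_score: int  # assumed scale: 1 (low) to 5 (high)
    approved_by: Optional[str] = None

    def approve(self, engineer: str) -> None:
        # A plan without rollback steps is not reviewable, so refuse it.
        if not self.rollback:
            raise ValueError("refusing approval: no rollback steps attached")
        self.approved_by = engineer


def deploy(proposal: ChangeProposal, push: Callable[[str], None]) -> int:
    """Push commands only if a named human has approved the proposal."""
    if proposal.approved_by is None:
        raise PermissionError("change has not been approved by a human")
    for command in proposal.commands:
        push(command)
    return len(proposal.commands)


proposal = ChangeProposal(
    summary="Restore corporate aggregates in transit export policy",
    commands=[
        "route-map EXPORT-TRANSIT permit 20",
        " match ip address prefix-list CORP-AGGREGATES",
    ],
    rollback=["no route-map EXPORT-TRANSIT permit 20"],
    risk_score=3,
)

sent: List[str] = []  # stands in for the device session
try:
    deploy(proposal, sent.append)
except PermissionError as exc:
    print("blocked:", exc)

proposal.approve("on-call network engineer")
deploy(proposal, sent.append)
```

The design choice is that the approver's name lives on the proposal itself. The agent can draft and explain, but the record of who owns the blast radius is a person.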

The AI becomes a force multiplier. It does the parts humans are bad at, which is reading ten thousand lines of config in thirty seconds and remembering every change made in the last six months. The human does the parts AI is bad at, which is understanding business context, weighing political risk, and owning the consequences.

This is not a compromise. It's actually a better operational model than either humans alone or agents alone.

## The irony I keep coming back to

The funniest thing about all of this is that the most conservative network engineers I know are probably going to become the biggest AI adopters in the next five years.

Not because they've capitulated to the hype. Because once trust is earned, the value proposition becomes undeniable. If AI can genuinely reduce outages, catch mistakes before they hit production, preserve the tribal knowledge of senior engineers who are retiring, and shorten the path from "something is wrong" to "here's what changed and how to fix it", then the same engineers who are sceptical today will be the ones quietly running the most sophisticated AI-assisted operations tomorrow.

But trust has to be earned. It cannot be marketed. And it definitely cannot be earned by vendors who present autonomous network agents as ready for production when they demonstrably are not.

## Closing thought

The network is not just infrastructure. It's accumulated operational history, political compromise, undocumented behaviour, and institutional scar tissue encoded into CLI syntax and YAML files. It's the most stateful, most consequential, least forgiving environment in enterprise IT.

That's why network engineers are cautious about AI.

And honestly, they should be. The engineers who survive twenty years in this profession survive because they earned every ounce of that caution. They built it from outages, from war rooms, from change windows that went sideways, from the specific kind of professional pain that only comes from being the person whose change broke something important.

The AI industry would do well to listen to them, rather than dismiss them.

The future of AI in networking is genuinely exciting. But it belongs to the vendors and platforms who treat network engineers as the experts they are, and who design AI systems to amplify their judgement rather than replace it.

That's the bet I'm making at rConfig. And it's the bet I'd encourage anyone else building in this space to make too.

About the Author

Stephen Stack

CTO, rConfig

Stephen is the creator of rConfig and a veteran network automation engineer with over 15 years of experience. He is dedicated to solving the complexities of network management through open-source innovation and enterprise-grade tooling.
